From the user's perspective, Beat Sync feels like magic — drop in a song, get back a beat-perfect edit. From an engineering perspective, it's a careful chain of audio analysis and visual orchestration that took Kaiber's team years to refine through real artist projects with Yaeji, Boiler Room, Praying, Jon Rafman, Grimes, Chief Keef and others. Here's the breakdown.

Step 1: BPM detection & structural analysis

The moment you upload an audio file, Cuts runs it through a BPM-detection pass. This is more than counting beats per minute: the pass identifies the song's underlying rhythmic grid, locates the downbeats, maps where verses give way to choruses, and flags dynamic transitions like drops, builds and breakdowns. Automatic BPM detection is remarkably accurate on steady-tempo material; most dance and pop tracks land within 1 BPM of their actual tempo on the first pass.
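As a rough illustration of the tempo part of this pass, the sketch below estimates BPM from a list of note-onset timestamps by taking the median inter-onset interval and folding it into a typical tempo range, which undoes half-time and double-time ambiguity. It then lays out a beat grid from a known first downbeat. The onset list is assumed to come from an earlier onset detector that is not shown, and the function names, the 70 to 180 BPM range and the 4/4 assumption are all hypothetical. This is not Kaiber's detector.

```python
from statistics import median


def estimate_bpm(onset_times, min_bpm=70.0, max_bpm=180.0):
    """Estimate tempo from sorted onset timestamps (seconds).

    Hypothetical sketch: median inter-onset interval, folded into
    [min_bpm, max_bpm] to undo half-time / double-time ambiguity.
    """
    if max_bpm < 2 * min_bpm:
        raise ValueError("range must span at least a factor of 2 so folding lands inside it")
    intervals = [b - a for a, b in zip(onset_times, onset_times[1:]) if b > a]
    if not intervals:
        raise ValueError("need at least two distinct onsets")
    candidates = []
    for interval in intervals:
        bpm = 60.0 / interval
        while bpm < min_bpm:  # below range: onsets may fall only every other beat
            bpm *= 2
        while bpm > max_bpm:  # above range: onsets may include off-beats
            bpm /= 2
        candidates.append(bpm)
    # The median is robust to a few missed or extra onsets.
    return median(candidates)


def beat_grid(bpm, first_downbeat, duration, beats_per_bar=4):
    """Return (time, is_downbeat) pairs from first_downbeat up to duration.

    Assumes a constant tempo and a known first downbeat (hypothetical inputs).
    """
    period = 60.0 / bpm
    grid = []
    k = 0
    while True:
        t = first_downbeat + k * period  # multiply rather than accumulate, to avoid drift
        if t >= duration:
            break
        grid.append((t, k % beats_per_bar == 0))
        k += 1
    return grid
```

With slightly jittered onsets such as `[0.0, 0.49, 1.0, 1.51, 2.0]`, `estimate_bpm` lands close to 120. Real songs with tempo changes or swing would need a detector that tracks tempo over time rather than a single global median.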

Step 2: Stem separation via Audioshake

Cuts then routes the track through Audioshake, the same stem-separation engine that powers Canvas's audioreactivity. Audioshake splits the audio into its constituent layers: vocals, drums, bass and other instruments. This separation matters because not every visual change should hit on every beat. Cuts can land cuts on snare hits but change captions on vocal phrases, or shift visual energy with the bass while holding color steady through the harmonic content.
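One way to picture this routing is a small table that says which stem drives which kind of visual event. The sketch assumes the stems have already been separated and run through an onset detector, so each stem is just a list of hit times. It does not call Audioshake, and the event names and the routing table itself are hypothetical.

```python
# Hypothetical routing table: visual event type -> stem whose hits trigger it.
# The "other" stem (harmonic content) is deliberately unrouted, so color stays steady.
DEFAULT_ROUTING = {
    "cut": "drums",          # e.g. snare hits
    "caption": "vocals",     # vocal phrase onsets
    "energy_shift": "bass",  # bass hits push visual intensity
}


def route_events(stem_onsets, routing=None):
    """Merge per-stem onset times into one time-sorted list of (time, event_type).

    stem_onsets: dict mapping stem name -> list of onset times in seconds
    (assumed output of stem separation plus onset detection).
    """
    routing = DEFAULT_ROUTING if routing is None else routing
    events = []
    for event_type, stem in routing.items():
        for t in stem_onsets.get(stem, []):
            events.append((t, event_type))
    events.sort()  # chronological order; ties broken by event name
    return events
```

Keeping the routing as data means a template could change the feel of an edit, for instance by cutting on bass instead of drums, without rerunning any analysis.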

Step 3: Cut-point planning

With BPM and stems in hand, Cuts plans where every visual transition will land. It combines the structural analysis from step one (identifying, say, that 0:45 is the start of the first chorus, 1:30 is the bridge and 2:15 is the drop) with the per-stem rhythmic detail from step two to choose cut points that feel intentional rather than random. The result is an edit that breathes with the song instead of just blinking on every quarter note.
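A minimal sketch of this planning step, assuming the section boundaries and beat times are already known: each section start is snapped to the nearest beat, and cuts are then placed every N beats, with N depending on the section label so the edit moves faster in a chorus or drop than in a verse. The labels, the beats-per-cut values and the function names are hypothetical, not Kaiber's actual rules.

```python
from bisect import bisect_left

# Hypothetical pacing: how many beats each shot lasts in each kind of section.
BEATS_PER_CUT = {"verse": 8, "chorus": 4, "bridge": 8, "drop": 2}


def nearest_index(grid, t):
    """Index of the grid time closest to t (grid must be sorted and non-empty)."""
    i = bisect_left(grid, t)
    if i == 0:
        return 0
    if i == len(grid):
        return len(grid) - 1
    # Ties go to the earlier beat.
    return i if grid[i] - t < t - grid[i - 1] else i - 1


def plan_cuts(sections, beat_times, beats_per_cut=BEATS_PER_CUT, default_step=4):
    """Return cut times, given sections as sorted (start_seconds, label) pairs."""
    starts = [nearest_index(beat_times, start) for start, _ in sections]
    cuts = []
    for k, (_, label) in enumerate(sections):
        begin = starts[k]
        end = starts[k + 1] if k + 1 < len(sections) else len(beat_times)
        step = beats_per_cut.get(label, default_step)
        # The snapped section start becomes a cut; inner cuts follow the section's pace.
        cuts.extend(beat_times[i] for i in range(begin, end, step))
    return cuts
```

Working in beat indices rather than seconds keeps every cut exactly on the grid, so rounding never pushes a cut slightly off the beat.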

Step 4: Visual orchestration

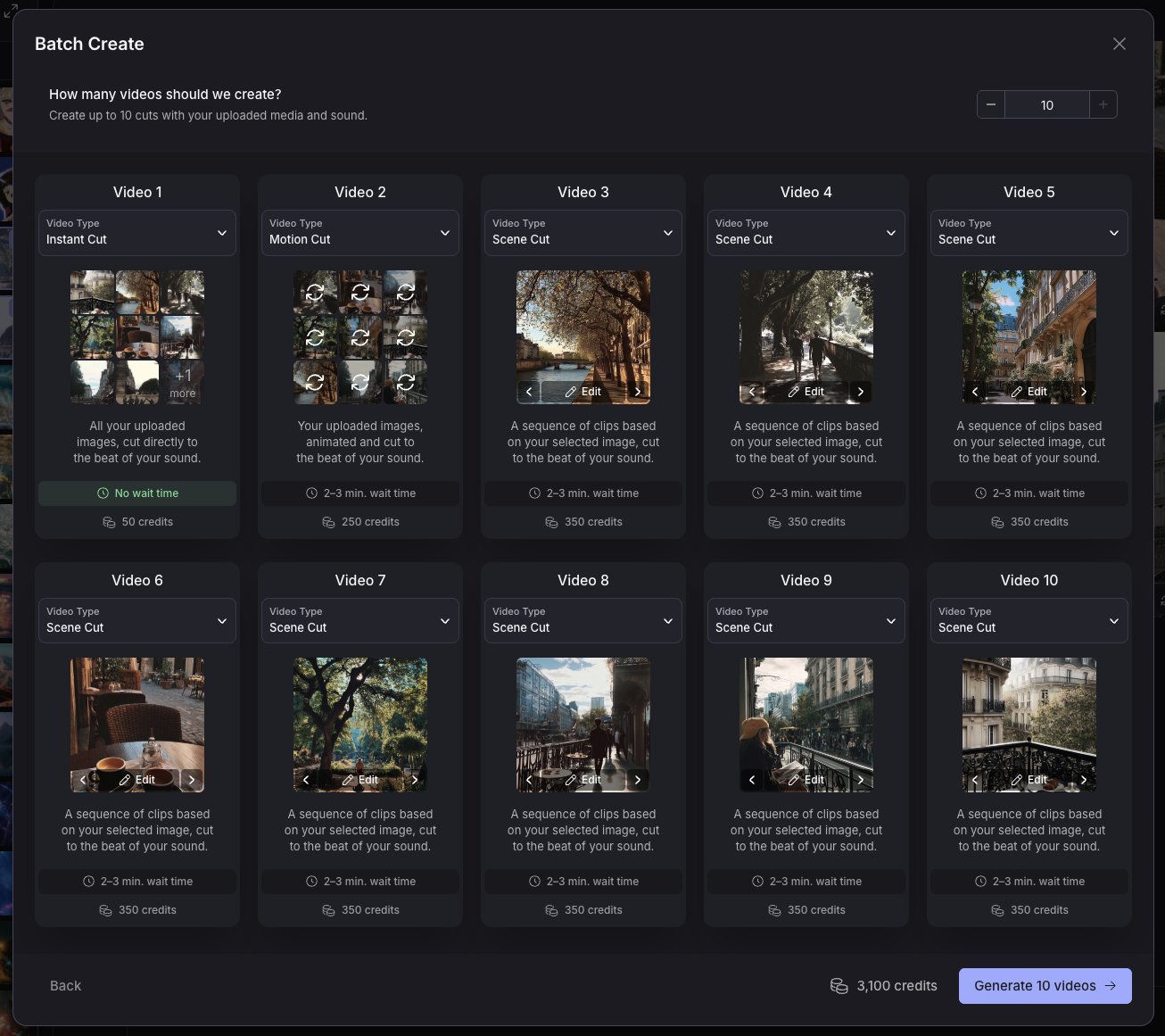

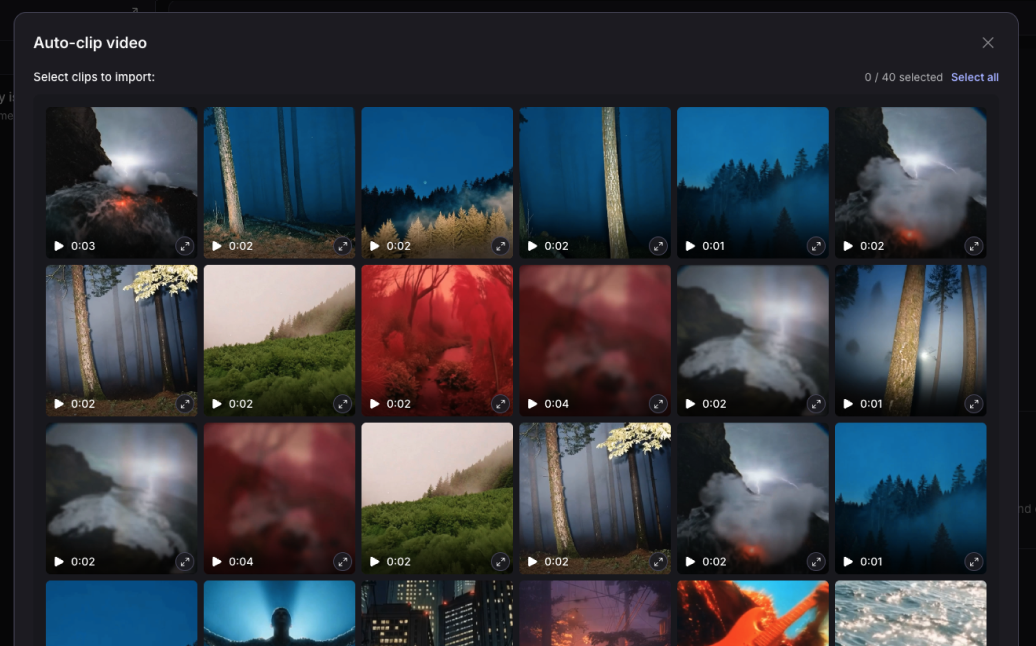

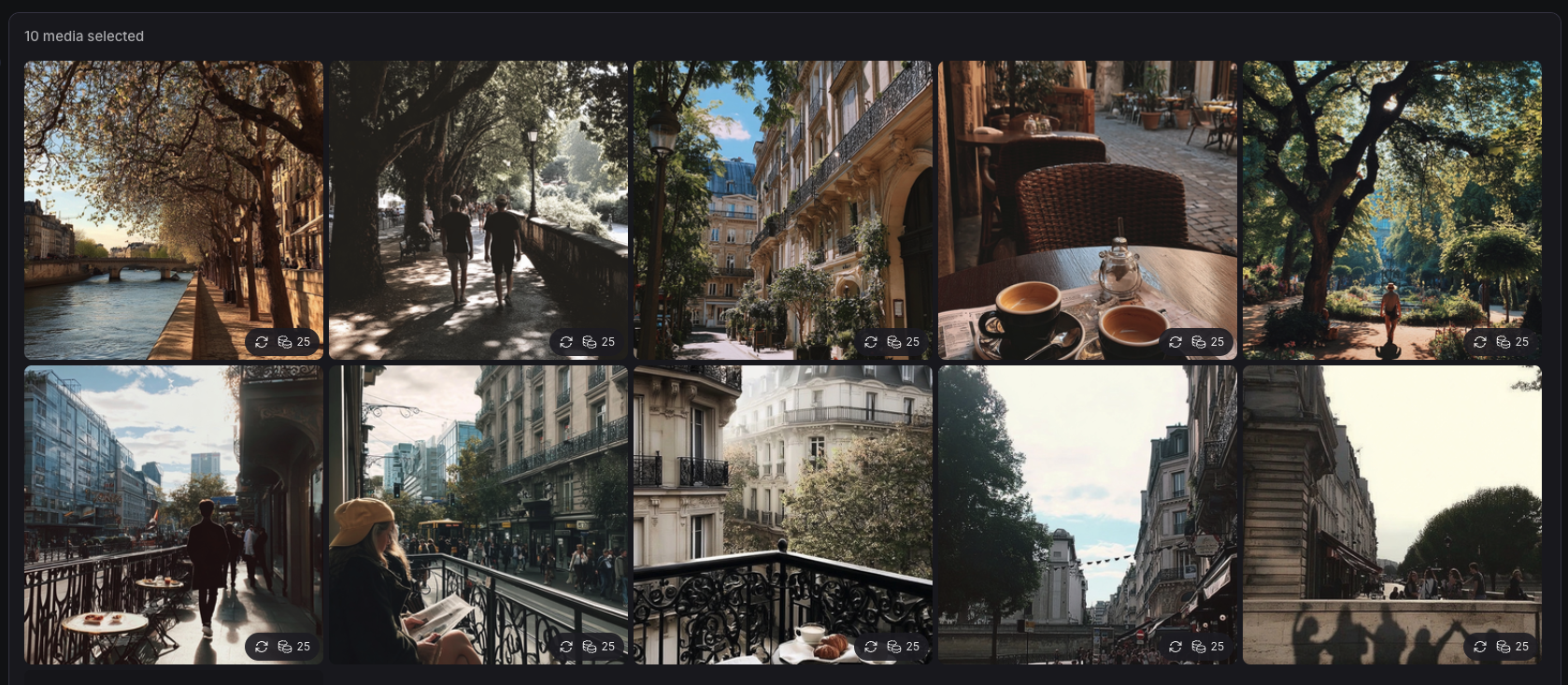

Now Cuts pulls in your uploaded visuals (videos, images, or both) and assigns them to the cut points. Clips play in the order you uploaded them, so you keep control over the narrative if you want it, but Cuts decides how long each clip plays, how it transitions, how it crops, and how it stretches or compresses to fit the beat grid. Different templates apply different orchestration logic; we'll cover that in the next section.
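The assignment itself can be sketched as walking the cut points in order and handing each slot the next clip in upload order, cycling when there are more slots than clips. For video, the sketch computes a playback-speed factor that stretches or compresses the clip toward the slot length, clamped to a hypothetical range of 0.8x to 1.25x so motion doesn't look unnatural; stills are simply held. Transitions and cropping are left out, and every name here is illustrative.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Placement:
    clip: str
    start: float  # seconds into the song
    end: float
    speed: float  # playback rate; >1 compresses, <1 stretches


def assign_clips(
    clips: List[Tuple[str, Optional[float]]],
    cut_times: List[float],
    song_end: float,
    max_speed_change: float = 1.25,
) -> List[Placement]:
    """Assign clips (name, duration in seconds, or None for a still) to slots between cuts.

    Clips are used in upload order and cycled when there are more slots than clips.
    """
    if not clips:
        raise ValueError("need at least one clip")
    bounds = list(cut_times) + [song_end]
    placements = []
    for n, (start, end) in enumerate(zip(bounds, bounds[1:])):
        slot = end - start
        if slot <= 0:
            raise ValueError("cut times must be strictly increasing and before song_end")
        name, duration = clips[n % len(clips)]
        if duration is None:
            speed = 1.0  # still image: hold for the whole slot
        else:
            # Rate that would make the clip exactly fill the slot, clamped.
            # Excess footage is assumed to be trimmed at the cut; if the clip is still
            # too short at the slowest rate, a fill policy would be needed (not modeled).
            ideal = duration / slot
            speed = min(max(ideal, 1.0 / max_speed_change), max_speed_change)
        placements.append(Placement(name, start, end, speed))
    return placements
```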

Step 5: Caption timing

If your song has lyrics or vocal phrases, Cuts can auto-generate captions that snap to the nearest kick, Kaiber's signature touch. Each caption appears slightly before the vocal lands and disappears on the next strong beat. The result is the kinetic typography style familiar from viral lyric videos, except you didn't have to time anything manually.
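The timing rule can be sketched for a single vocal phrase as follows. Two readings of the description are assumptions here: "snap to the nearest kick" is taken to mean the latest kick at or before the vocal onset, provided it falls within a short lead window, and "the next strong beat" is taken to be the first strong beat at or after the phrase ends. The kick and strong-beat lists are assumed to come from the drum stem and the beat grid, and the parameter values are hypothetical.

```python
from bisect import bisect_left, bisect_right


def time_caption(text, onset, offset, kicks, strong_beats, max_lead=0.25, fallback_lead=0.1):
    """Return (text, start, end) for one vocal phrase.

    onset/offset: when the vocal phrase starts and ends (seconds).
    kicks, strong_beats: sorted beat times (hypothetical inputs).
    """
    # Snap the start to the latest kick at or before the vocal, if it is close enough,
    # so the caption pops in just ahead of the voice.
    i = bisect_right(kicks, onset)
    if i > 0 and onset - kicks[i - 1] <= max_lead:
        start = kicks[i - 1]
    else:
        start = max(onset - fallback_lead, 0.0)  # no nearby kick: small fixed lead
    # Hold the caption until the first strong beat at or after the phrase ends.
    j = bisect_left(strong_beats, offset)
    end = strong_beats[j] if j < len(strong_beats) else offset
    return text, start, end
```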

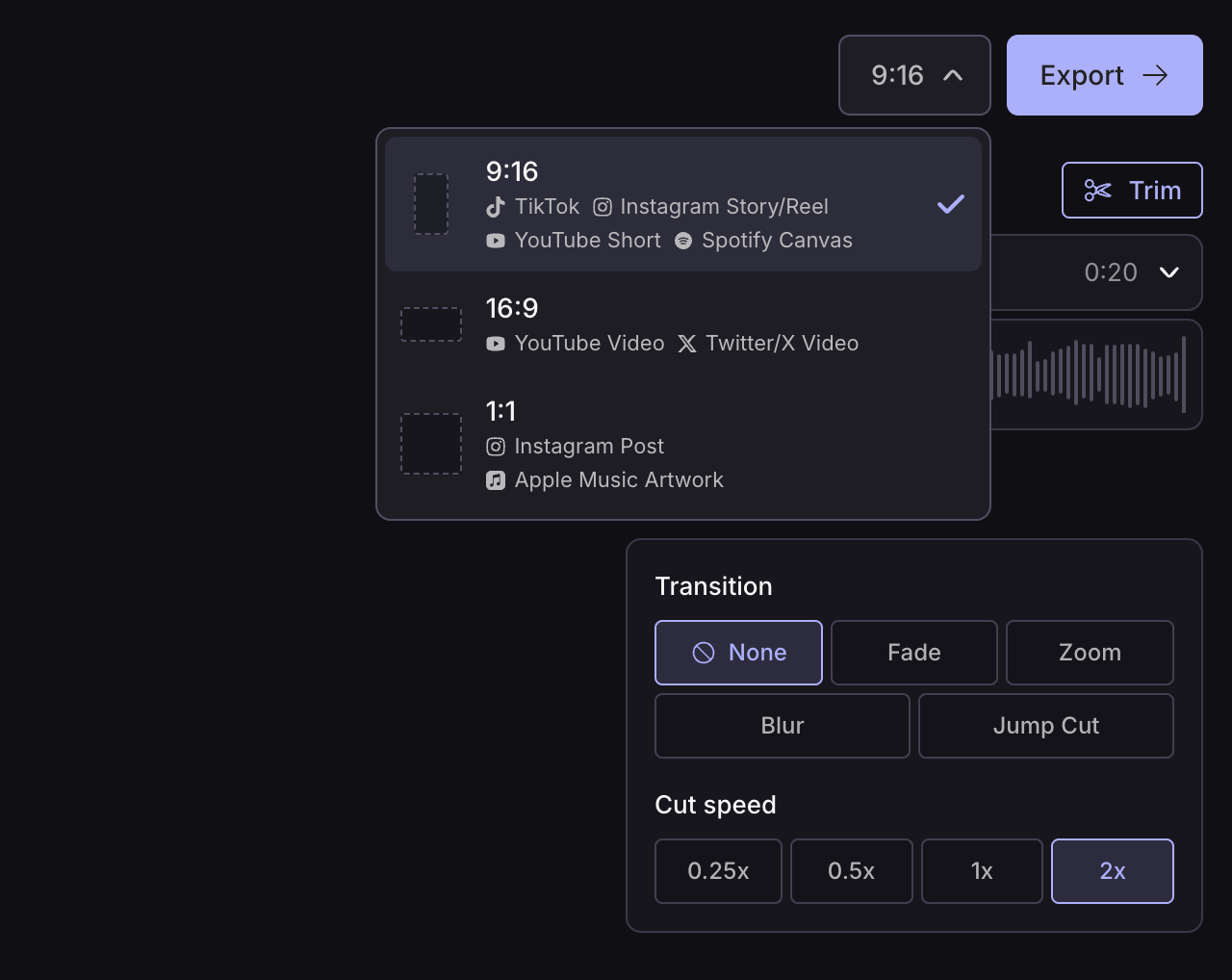

Step 6: Multi-aspect render

Finally, Cuts renders the finished edit in multiple aspect ratios in a single pass: 9:16 for TikTok, Instagram Reels and YouTube Shorts; 1:1 for the Instagram feed; 16:9 for YouTube. Rather than letterboxing, Cuts crops intelligently, keeping faces and centers of motion in frame as the aspect ratio changes.
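The geometry behind focus-aware cropping can be sketched for one frame: find the largest window of the target aspect ratio that fits the source, center it on a focus point, and clamp it so it never leaves the frame. The focus point is assumed to come from a face or motion detector that is not shown, and the names are hypothetical. A production system would also track and smooth the focus point over time so the crop doesn't jitter between frames.

```python
# Output ratios from the text; the dict and function names are hypothetical.
ASPECTS = {"9:16": (9, 16), "1:1": (1, 1), "16:9": (16, 9)}


def focus_crop(src_w, src_h, aspect_w, aspect_h, focus_x, focus_y):
    """Largest crop of the target aspect that fits the source, centered on the
    focus point (e.g. a detected face) and clamped to stay inside the frame.

    Returns (left, top, width, height) in integer pixels.
    """
    # Integer comparison of src_w/src_h against aspect_w/aspect_h avoids float error.
    if src_w * aspect_h > src_h * aspect_w:
        crop_h = src_h  # source is wider than the target: keep full height
        crop_w = src_h * aspect_w // aspect_h
    else:
        crop_w = src_w  # source is taller or equal: keep full width
        crop_h = src_w * aspect_h // aspect_w
    left = min(max(focus_x - crop_w // 2, 0), src_w - crop_w)
    top = min(max(focus_y - crop_h // 2, 0), src_h - crop_h)
    return left, top, crop_w, crop_h


def crops_for_all(src_w, src_h, focus_x, focus_y):
    """One crop rectangle per output aspect ratio, all from the same focus point."""
    return {name: focus_crop(src_w, src_h, w, h, focus_x, focus_y)
            for name, (w, h) in ASPECTS.items()}
```

For a 1920x1080 frame with a face at (1500, 540), the 9:16 window slides right to keep the face centered, while the 1:1 window is pushed against the right edge because centering it would run past the frame.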

The result

You uploaded a song and a folder of clips. In about five minutes you got back ten polished, beat-synced, captioned, multi-aspect-ratio music videos, and you never touched a timeline.